The Control Stack: Consensus, Authority, and Roles

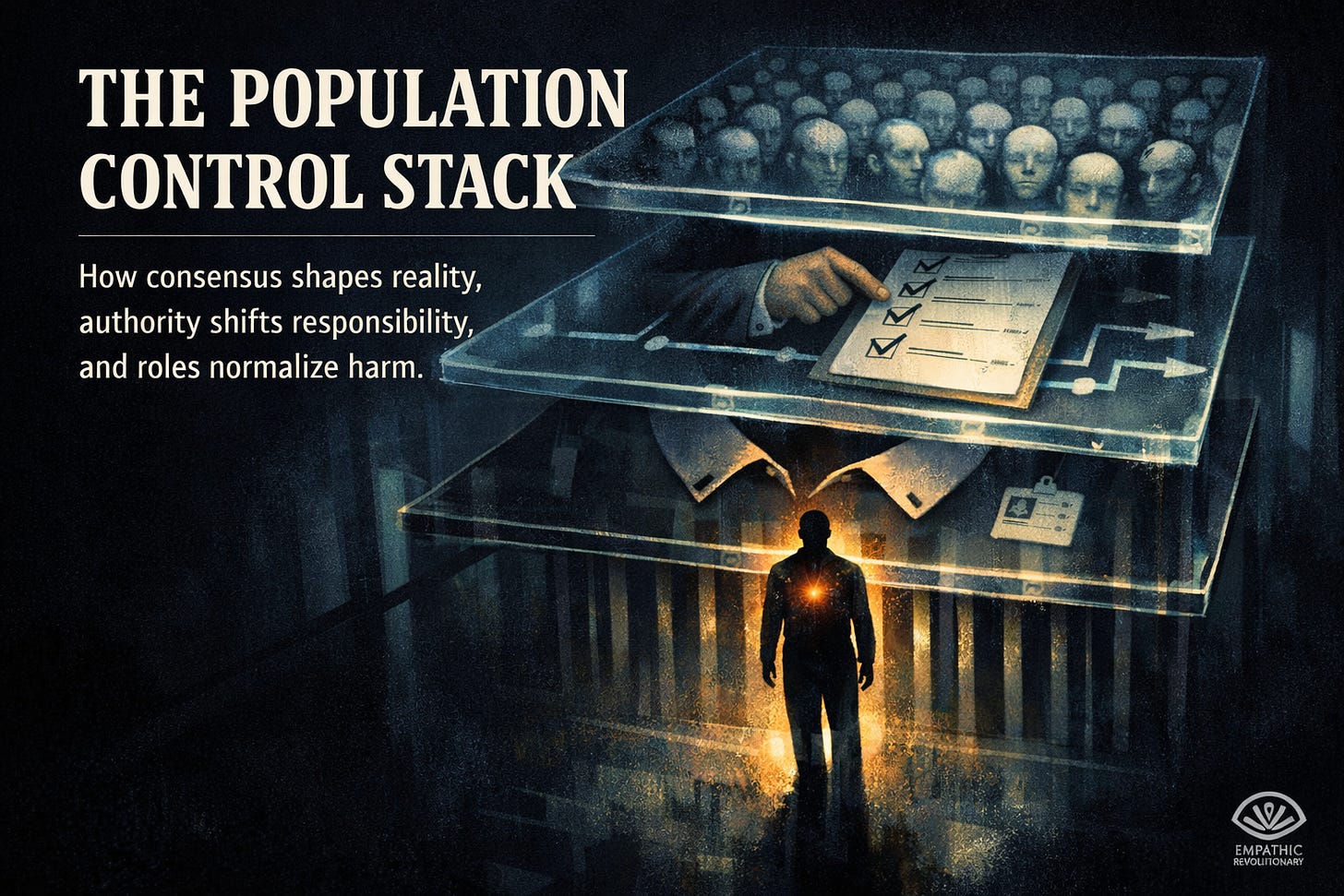

The Population Control Stack

How consensus shapes reality, authority shifts responsibility, and roles normalize harm.

Conformity, obedience, and roles—viewed as a system.

When control works smoothly, consensus shapes what feels real, authority shifts responsibility, and roles shape what becomes normal.

Most of us believe we would spot a dangerous system right away.

In everyday life, control often looks normal. It moves through politeness, routine, and the quiet pressure to keep things comfortable. It hides in phrases like “I’m just doing my job,” and in the way a role narrows what you feel allowed to notice.

Three well-known studies in social psychology offer a practical systems model of how conformity, obedience, and role behavior take hold in groups.

Here, “systems” means patterns created by relationships, incentives, and shared social cues.

1) Asch: How Group Agreement Can Bend What Feels Real

In Solomon Asch’s conformity experiments in the 1950s, a participant sat with a small group and answered a simple visual question: which of three comparison lines matches the length of a reference line? The task was easy.

The manipulation was social. The other people in the room were working with the experimenter, and on certain rounds they all gave the same answer even though it was obviously wrong.

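About three quarters of participants went along with the group at least once, and roughly a third of all answers on those rounds matched the group's wrong answer.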

It is easy to call this weakness, but the mechanism is more precise: people use other people as reality-checking devices. When everyone around you sounds confident, the social signal gets loud, and disagreeing makes you feel like you are the problem.

From a systems perspective, Asch reveals a reinforcing loop:

The group performs certainty.

That performance creates pressure to agree.

Agreement makes the group look even more certain to the next person.

This loop does not require threats. Social discomfort supplies the pressure.

Two kinds of pressure operate at the same time:

Normative pressure (belonging pressure): “I do not want to stand out.”

Informational pressure (reality pressure): “Maybe I missed something.”

That is why the appearance of consensus matters inside institutions. The point often goes beyond persuasion. It is about shrinking what feels safe, reasonable, and normal to say. Once everyone appears to agree, dissent feels risky, and then it starts to feel irrational.

A key detail from Asch is how fragile unanimity is. When even one other person dissents, conformity drops sharply. The reason is structural: the system loses its main weapon, the feeling that disagreement means you are alone.

Practical takeaway: Reducing conformity rarely requires a majority. Visible permission to disagree changes the room.

2) Milgram: How Authority Can Move Responsibility Off Your Shoulders

Stanley Milgram’s obedience studies in the early 1960s are famous because they show how far ordinary people can go under pressure from authority. An experimenter told participants to deliver what they believed were electric shocks to another person after each wrong answer. The shocks were fake, but participants believed the pain was real. The “learner,” who was working with the experimenter, protested, pleaded, and eventually went silent.

In the best-known version of the study, about two thirds of participants continued to the maximum 450-volt level, far beyond what observers had predicted.

Milgram is often summarized as “humans can do terrible things.” That is true, but it misses the sharper systems lesson: authority can change where responsibility feels like it belongs.

A system does not need to convince you that harm is good. It can convince you that harm is not really yours, because you are acting as an instrument instead of as the author.

Milgram’s setup shows how structure does the work:

Legitimacy cues: the setting signals expertise and authority (lab, title, credentials).

Procedure framing: the task sounds like routine (“the protocol”).

Gradual escalation: each step feels small, even when the overall path becomes extreme.

Social cost of refusal: stopping creates conflict, awkwardness, and disapproval.

Language is one of the strongest tools. Authority provides scripts that dissolve moral agency:

“The experiment requires that you continue.”

“Please go on.”

“I take responsibility.”

These lines do not physically force anyone. They make the action feel less like a personal choice.

Milgram also points to conditions that reduce obedience: authority that looks uncertain or distant, peers who refuse, a victim who is close and clearly human, and situations where responsibility is explicit and cannot be handed off.

Practical takeaway: Preventing large-scale harm requires systems where responsibility stays clear, traceable, and personal.

3) Stanford Prison: How Roles and Environments Can Turn Into Behavior Engines

The Stanford Prison Experiment in 1971 is widely cited and heavily criticized. Participants were placed in a simulated prison and randomly assigned to be “guards” or “prisoners.” The situation escalated into humiliation and distress, and the study ended after six days of a planned two weeks.

As science, the study is compromised. The ethics were unacceptable, and later reporting suggests some participants may have been coached toward specific behaviors.

Even with those limits, a broader principle shows up in real institutions every day: roles and environments can reliably shape behavior, especially when power is uneven and oversight is weak.

Roles operate like compression. They narrow attention and reshape identity.

A role defines what counts as a problem.

A role defines what success looks like.

A role defines what can be ignored without consequence.

Once you act from a role, your mind works to make your behavior feel coherent. People rationalize. People adjust their moral language to fit what they are doing.

In systems terms, behavior and identity create a feedback loop: actions shape self-story, and self-story then justifies the next action.

Combine role power with isolation, dehumanization, and weak accountability, and degrading behavior becomes easier to produce and harder to stop. The outcome becomes predictable because the environment rewards certain actions and shields them from consequences.

Practical takeaway: A setting that makes cruelty easy and accountability hard tends to produce cruelty over time, largely regardless of who enters.

The Control Stack: A Simple Systems Map

Taken together, these studies form a layered model of social control:

Consensus pressure shapes what feels real (Asch).

Authority pressure shapes who feels responsible (Milgram).

Role and environment pressure shapes what behavior becomes normal (Stanford Prison).

This is why harmful systems can feel calm from the inside. Each layer lowers the friction of compliance:

consensus reduces doubt

procedure reduces guilt

roles reduce empathy

distance reduces felt consequence

When these layers line up, people often experience themselves as following the process, maintaining standards, or “doing the job,” rather than making a moral choice.

Control works best when it converts moral questions into operational questions.

What Resistance Looks Like

If systems have power, responses must focus on conditions, incentives, and design. Personal courage matters, but structural leverage matters more.

These moves follow directly from the three studies:

Break the illusion of unanimity early; one visible dissenter changes the social math.

Slow escalation; add checkpoints between small steps and irreversible harm.

Make responsibility explicit; replace “the process” with “who decided, and why.”

Shorten chains of command; long chains make it easier to outsource guilt.

Reduce distance from consequences; human contact beats abstract metrics.

Build oversight that resists capture; accountability must be independent to work.

Protect the “difficult” person; early warnings often sound disloyal inside rigid systems.

The deeper message is not that humans are weak. Humans are highly responsive to cues, incentives, and group dynamics. Those traits make cooperation possible, and those same traits can make domination scalable.

Once you see the mechanism, the most useful question changes:

What kind of environment am I participating in, and what behaviors does it quietly reward?

Leverage lives inside that question.